WAYFINDING BRACELET

Overview

Created in collaboration with Vidia Anindhita, Wayfinding Bracelet is a navigation accessory that translates turn-by-turn directions into haptic vibrations and non-intrusive audio feedback to safely guide visually impaired users to their destination.

The Problem

For people with visual impairments or blindness, wayfinding, the process of navigating unfamiliar spaces to reach a destination, is a complex and intimidating task. Although mobile phones now come preinstalled with screen readers (e.g., VoiceOver on iPhone and TalkBack on Android), Maps and similar GPS navigation apps are still not user-friendly for them.

People with visual impairments or blindness rely heavily on multisensory feedback from their environment to navigate spaces, but a screen reader tends to overpower their other senses, making it hard to concentrate on what step to take next. This can leave them distressed and vulnerable, especially when others in the surrounding environment are also distracted.

Context & Research

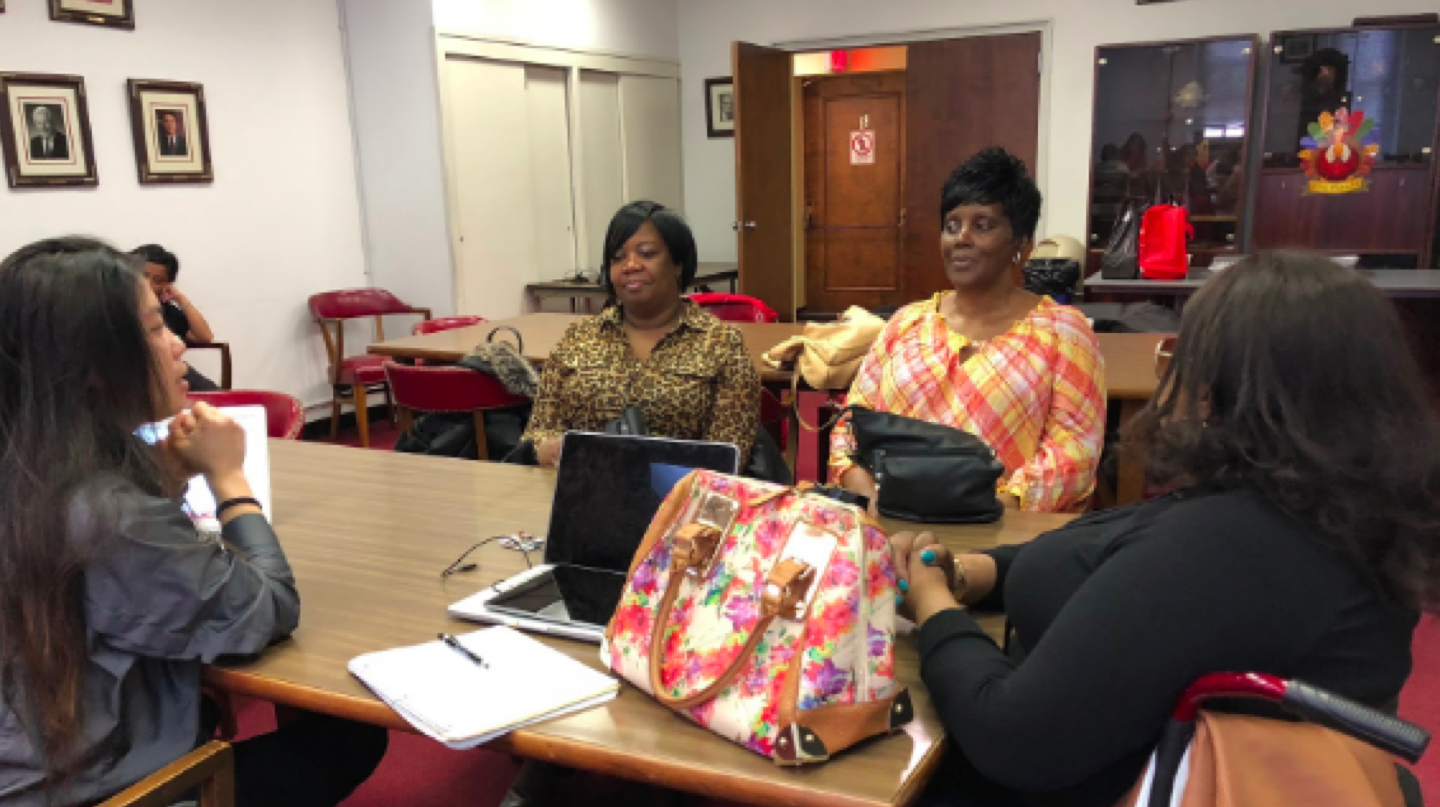

We saw an opportunity to provide people with visual impairments or blindness with wearable technology that helps them navigate safely without overwhelming their senses. After analyzing existing wearable wayfinding systems, we scheduled one-on-one interviews with visually impaired people at the Andrew Heiskell Braille and Talking Book Library and the Helen Keller National Center in New York to gain insight into how they get around, specifically what works well and what needs to be improved.

Concept Ideation

From our research, we realized the importance of designing the wayfinding experience around the principles of calm technology, a type of information technology in which the interaction between the technology and its user is designed to occur in the user's periphery rather than constantly at the center of attention.

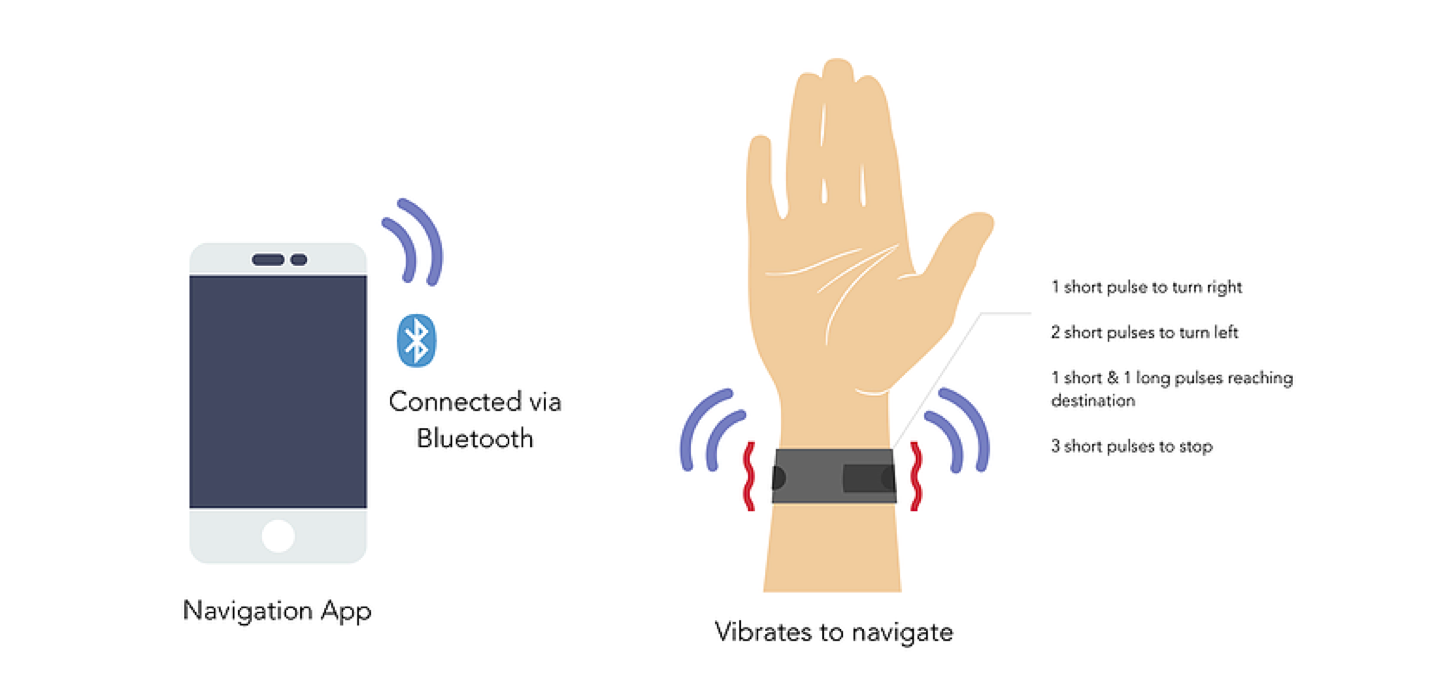

We proposed a navigation mobile app that synchronizes with a wearable device to translate directional cues into a combination of haptic vibrations and non-intrusive audio feedback to guide users to their destination.

We chose haptic feedback because it is invisible and intimate and, if implemented correctly, can register as sensory memory and even shape habits. We broke the wayfinding process down into five steps and mapped vibrations and audio feedback to each.

Step 1: Orienting oneself in the environment

Step 2: Choosing the route

Step 3: Keeping on track

Step 4: Identification of surrounding landmarks

Step 5: Recognizing destination has been reached
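To make the idea of mapping cues to steps concrete, here is a minimal sketch of how such a mapping might be represented in software. It is illustrative only: the names (WayfindingStep, Cue, CUES, cue_for, total_duration_ms), the pulse durations, and the audio phrases are all hypothetical placeholders, not the patterns used in our prototype.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Tuple


class WayfindingStep(Enum):
    """The five wayfinding steps listed above."""
    ORIENT = 1
    CHOOSE_ROUTE = 2
    KEEP_ON_TRACK = 3
    IDENTIFY_LANDMARK = 4
    ARRIVED = 5


@dataclass(frozen=True)
class Cue:
    """A haptic pattern plus an optional short audio prompt."""
    pulses_ms: Tuple[int, ...]   # duration of each vibration pulse, in milliseconds
    audio: Optional[str] = None  # None means the cue is haptic-only


# Hypothetical patterns, chosen only to show the shape of the mapping.
CUES = {
    WayfindingStep.ORIENT: Cue((300,), "You are facing north."),
    WayfindingStep.CHOOSE_ROUTE: Cue((150, 150), "Route selected."),
    WayfindingStep.KEEP_ON_TRACK: Cue((100,)),  # haptic-only, stays in the periphery
    WayfindingStep.IDENTIFY_LANDMARK: Cue((100, 100, 100), "Landmark nearby."),
    WayfindingStep.ARRIVED: Cue((500, 500), "You have arrived."),
}


def cue_for(step: WayfindingStep) -> Cue:
    """Look up the cue assigned to a wayfinding step."""
    return CUES[step]


def total_duration_ms(cue: Cue, gap_ms: int = 100) -> int:
    """Total time a cue takes, assuming a fixed pause between pulses."""
    pauses = max(len(cue.pulses_ms) - 1, 0)
    return sum(cue.pulses_ms) + gap_ms * pauses
```

Keeping the "keeping on track" cue haptic-only in this sketch reflects the calm technology principle described earlier: the most frequent cue is the one that should demand the least attention.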

Technology

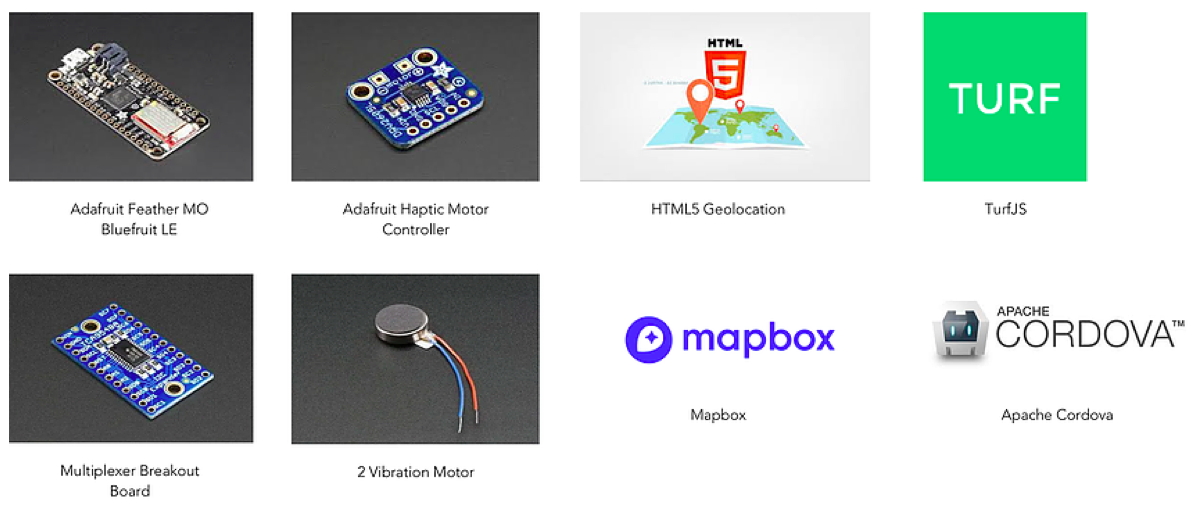

We used the following components to develop a working prototype that transmits location data over Bluetooth.

User Testing

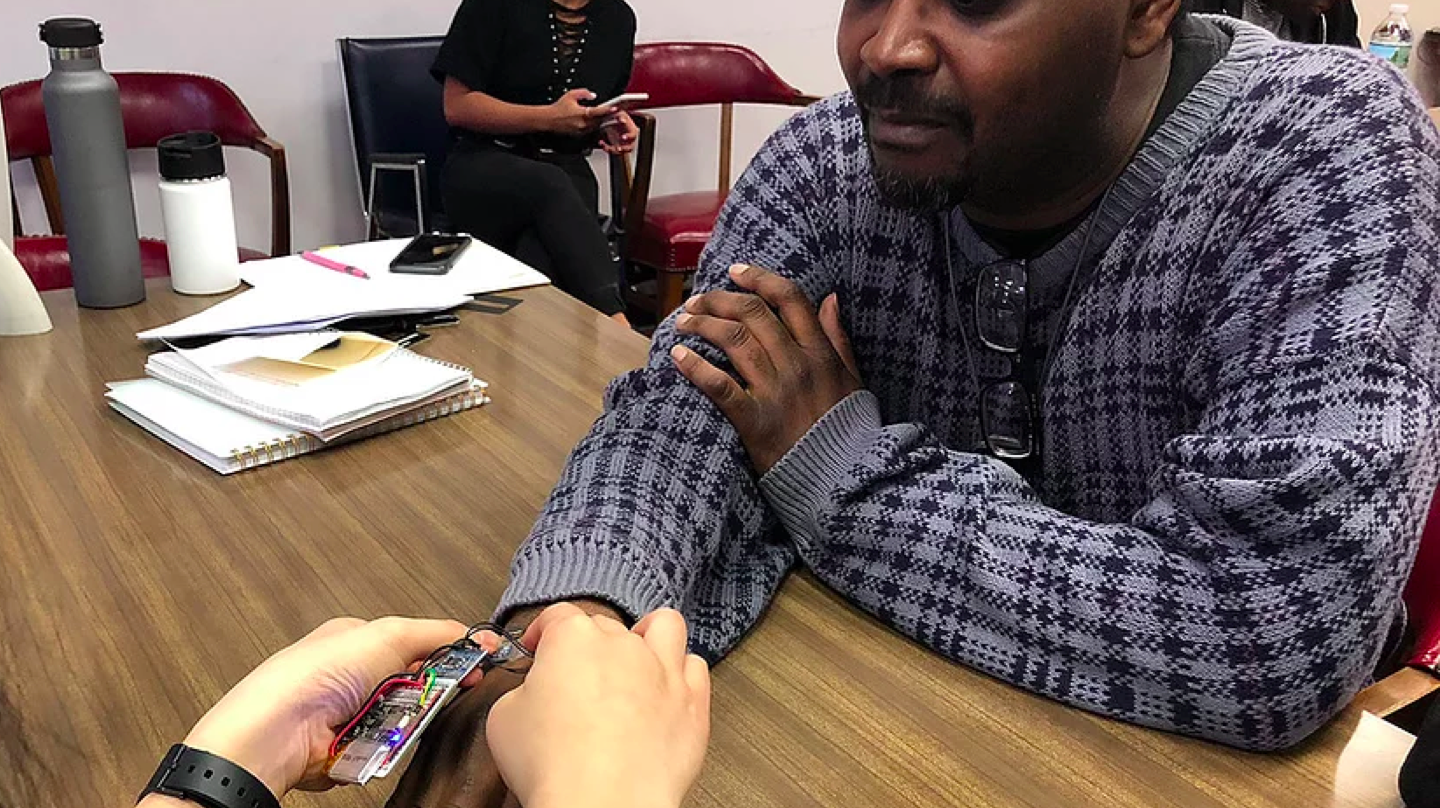

Once our first proof of concept was complete, we were excited to test it with users.

The feedback we received was very positive. Our users also asked for stronger vibrations, an indication at intersections, reliable accuracy, long battery life, and customizable designs at an accessible price point.

Next Steps

This was an incredibly rewarding project to take part in, and we want to continue refining our proof of concept for future development.

Our goal is not only to design something that is intuitive to learn and use, but also to bring a fashion perspective to both the practice and culture of inclusive design. When eyeglasses are not an option, we believe there need to be accessories for visually impaired people that are just as functional, stylish, and ubiquitous.